Copyright © 2015-2023 Standard Performance Evaluation Corporation

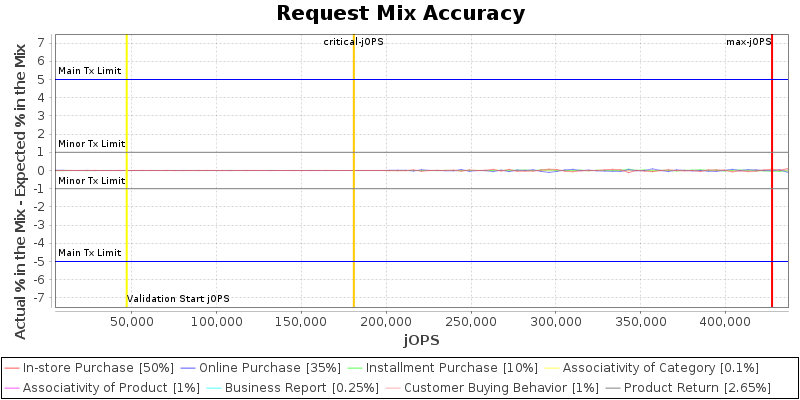

| Lenovo Global Technology ThinkSystem SR655 V3 | 427457 SPECjbb2015-Distributed max-jOPS | 180880 SPECjbb2015-Distributed critical-jOPS |


| Tested by: Lenovo Global Technology | Test Sponsor: Lenovo Global Technology | Test location: Beijing, China | Test date: May 12, 2023 |

| SPEC license #: 9017 | Hardware Availability: Aug-2023 | Software Availability: Apr-2023 | Publication: Wed Jul 5 10:41:44 EDT 2023 |

| SPECjbb2015-Distributed: Distributed JVMs/Single or Multi Hosts (# of groups: 16) |


| This section lists properties only set by user |


| Level | Check | Agent | Result |
| --- | --- | --- | --- |
| COMPLIANCE | Check properties on compliance | All | PASSED |
| CORRECTNESS | Compare SM and HQ Inventory | All | PASSED |